By The TENS Magazine Editorial Staff

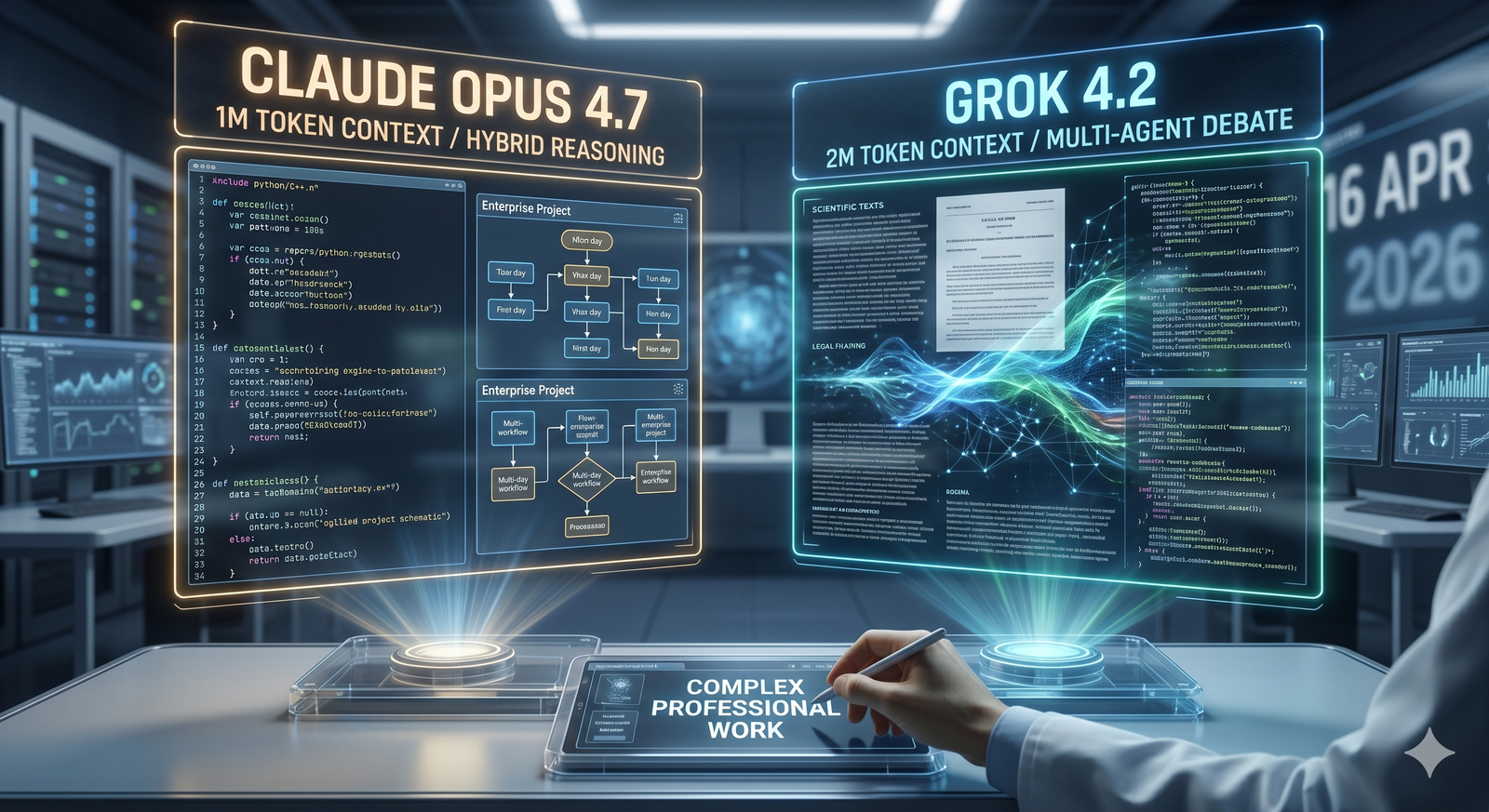

In recent weeks, two of the most ambitious artificial intelligence companies have released flagship models that demonstrate how quickly the frontier of large language models continues to advance. On April 16, Anthropic unveiled Claude Opus 4.7, the latest iteration of its Opus series. Shortly before that, xAI introduced Grok 4.2 (also designated Grok 4.20 in technical documentation), its newest high-performance offering. Both systems represent significant leaps in reasoning depth, task execution and practical utility, positioning them as immediate contenders for complex professional work.

Claude Opus 4.7 builds directly on the foundation of its predecessor, Opus 4.6, which arrived in February. The new model features a one-million-token context window and introduces what Anthropic calls “hybrid reasoning,” an adaptive mechanism that automatically scales its thinking time according to task difficulty. Simpler queries receive swift responses; harder problems benefit from extended deliberation. The improvements are most pronounced in agentic workflows — those in which the model must plan, use tools, iterate and self-correct over long horizons. Early benchmarks reported by Anthropic show a 13 percent gain on a 93-task coding evaluation, including several problems that previous versions could not solve. The model produces production-ready code with less supervision, catches its own errors more reliably and handles multi-day enterprise projects involving spreadsheets, presentations and documentation with greater consistency. Vision capabilities have also advanced, enabling stronger interpretation of technical diagrams and chemical structures.

Grok 4.2, by contrast, emphasizes scale and efficiency. It offers a two-million-token context window, double that of Opus 4.7, allowing it to ingest and reason over book-length documents or massive codebases in a single pass. xAI highlights the model’s industry-leading inference speed, native agentic tool-calling and what it claims is the lowest hallucination rate among frontier systems. A multi-agent variant coordinates separate reasoning, critique, tool-use and orchestration components to debate and refine answers before responding. Strict prompt adherence and rapid iterative updates during its public beta phase further distinguish it. API pricing for the reasoning variants begins at $2 per million input tokens and $6 per million output tokens, making it competitive for high-volume applications.
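To see what those rates mean in practice, a back-of-the-envelope calculation helps. The short Python sketch below assumes the quoted list prices ($2 per million input tokens, $6 per million output tokens) apply at a flat rate, with no volume discounts, caching or long-context surcharges. The function name and the sample workload are illustrative only; neither comes from xAI's documentation.

```python
# Rates quoted above for Grok 4.2 reasoning variants, in USD per million tokens.
INPUT_RATE_PER_MILLION = 2.00
OUTPUT_RATE_PER_MILLION = 6.00


def estimate_cost(input_tokens: int, output_tokens: int) -> float:
    """Hypothetical helper: estimated charge in dollars at flat list prices."""
    input_cost = input_tokens / 1_000_000 * INPUT_RATE_PER_MILLION
    output_cost = output_tokens / 1_000_000 * OUTPUT_RATE_PER_MILLION
    return round(input_cost + output_cost, 4)


# Illustrative workload: a prompt that fills the two-million-token window,
# answered with a 10,000-token reply.
print(estimate_cost(2_000_000, 10_000))  # 4.06
```

Under these assumptions, a single request that fills the entire context window would cost about $4, with the prompt itself accounting for nearly all of the charge.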

Direct head-to-head evaluations of the two newest versions are still emerging, but patterns from earlier comparisons between the Opus and Grok families offer clues. Claude models have often been praised for “tasteful” coding — producing elegant, well-structured solutions that align closely with human design sensibilities — and for meticulous instruction-following in creative or design-adjacent tasks. Grok variants tend to excel in speed, real-time data integration (particularly from the X platform) and handling sprawling contexts where sheer volume of information matters. Opus 4.7’s emphasis on sustained reliability and error recovery appears tailored for high-stakes enterprise environments; Grok 4.2’s combination of scale and low hallucination rate suits exploratory research, large-scale data synthesis and time-sensitive analysis.

For developers and software engineers, the choice may hinge on workflow. Those building autonomous agents that must run reliably for hours or days without drift might lean toward Claude Opus 4.7, whose safety-hardened training and self-correction mechanisms reduce the need for constant oversight. Researchers or analysts confronting enormous datasets — legal filings spanning thousands of pages, scientific literature reviews or comprehensive financial models — could find Grok 4.2’s expansive context and rapid processing more efficient. Creative professionals experimenting with multimodal tasks or iterative prototyping may appreciate both, though early user reports suggest Claude retains an edge in aesthetic judgment and Grok in raw iteration speed.

Beyond immediate use cases, the releases underscore broader trends. Anthropic continues to prioritize constitutional safety and controlled capability rollout, making Opus 4.7 available first to Pro, Team and Enterprise subscribers on its platform and through cloud partners. xAI, aligned with a more open, truth-seeking ethos, has positioned Grok 4.2 for broad developer access and rapid refinement based on real-world feedback. Neither model solves the fundamental challenges of A.I. alignment or energy consumption, yet both demonstrate measurable progress toward systems that can reliably augment — rather than merely mimic — human expertise.

As organizations begin integrating these tools, the deciding factor will likely be task specificity rather than blanket superiority. Claude Opus 4.7 and Grok 4.2 do not merely compete; they illustrate complementary strengths in an accelerating field. For knowledge workers, the real question is no longer whether A.I. can help, but which of these powerful new collaborators best matches the demands of the job at hand.